Production Meteor and Node, Using Docker, Part IV

Smart Cross-Cloud Load Balancing (and Round-Robin DNS Tricks)

Before we get into the details of this exercise, I’d like to call your attention to a feature of Docker Cloud called Deployment Tags. Deployment Tags control which nodes a service's containers run on. Combined with the secure, transparent network that Docker Cloud builds to connect all your Docker containers, they can help you achieve amazing uptime for your deployments.

That means containers on Digital Ocean can be linked to containers on AWS or Microsoft Azure. You can run a load balancer on each provider, and all of them can balance the same service containers with the same configuration. The complexities of service discovery and networking are abstracted away. It's deceptively easy!

Project Ricochet is a full-service digital agency specializing in Open Source & Docker.

Set up the Node Clusters

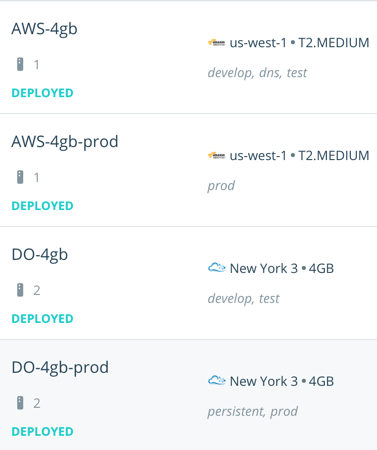

We covered steps on how to create node clusters in Part I. But here you’ll want to ensure you have enough tags to make each server uniquely identifiable. As my team works, we tend to have two nodes on a couple of services. Our Digital Ocean node for dev/test containers has the tags develop, dos, and test, for example.

Our node clusters on Docker Cloud

You don't need a lot of tags. Start simple and add more as it becomes more obvious how you want to distribute your containers.

Configure the Service Tags and Deployment Strategy

To spread a service's containers evenly across your servers, edit each service and set its Deployment tags to the fewest tags needed to match every server you want it deployed on. Think of it this way: the nodes should always have more tags on them than the services.

Take a look at the cropped screen capture above for a moment. Notice that if we wanted to deploy to both AWS-4gb and DO-4gb, we would use either the develop tag or the test tag.
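To make the tag rule concrete, here is a small Python sketch. It assumes Docker Cloud's documented matching behavior, where a service deploys only to nodes that carry every one of its deploy tags; that is why nodes should carry more tags than services. Only the DO-4gb tags come from our setup. The AWS tag sets, the extra production node, and the `eligible_nodes` helper are hypothetical.

```python
"""Simplified model of deploy-tag matching.

Assumption: a node is eligible for a service only if it carries every one of
the service's deploy tags (service tags must be a subset of node tags).
"""

# Only the DO-4gb tags come from the article; the rest are hypothetical.
NODES = {
    "DO-4gb": {"develop", "dos", "test"},
    "AWS-4gb": {"develop", "aws", "test"},   # hypothetical tags
    "AWS-prod": {"production", "aws"},       # hypothetical node
}


def eligible_nodes(service_tags, nodes):
    """Return the sorted names of nodes whose tags include all service tags."""
    wanted = set(service_tags)
    return sorted(name for name, tags in nodes.items() if wanted <= tags)


if __name__ == "__main__":
    # One tag shared by both dev/test nodes is enough to target both.
    print(eligible_nodes(["develop"], NODES))         # ['AWS-4gb', 'DO-4gb']
    print(eligible_nodes(["test"], NODES))            # ['AWS-4gb', 'DO-4gb']
    # Adding a provider-specific tag narrows placement to a single node.
    print(eligible_nodes(["develop", "dos"], NODES))  # ['DO-4gb']
```

Every tag you add to a service shrinks the set of eligible nodes, so the service should carry as few tags as it can while still picking out the nodes you want.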

Also make sure to set each service's Deployment Strategy to High Availability. As the Docker Cloud documentation explains:

> A service using the HIGH_AVAILABILITY strategy deploys its containers to the node that matches its deploy tags with the fewest containers of that service at the time of each container’s deployment. This means that the containers will be spread across all nodes that match the deploy tags for the service.
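The quoted rule is easy to model. The sketch below is a toy simulation in Python, not Docker Cloud's scheduler: the hypothetical `place_containers` helper puts each new container on the eligible node currently running the fewest containers of the service. Breaking ties by list order is our assumption.

```python
from collections import Counter


def place_containers(count, eligible, existing=None):
    """Place `count` new containers one at a time; return totals per node.

    eligible: names of nodes that match the service's deploy tags.
    existing: optional mapping of containers already running per node.
    Ties go to the node listed first in `eligible` (an assumption; this toy
    model does not reproduce Docker Cloud's real tie-breaking).
    """
    if not eligible:
        raise ValueError("no nodes match the service's deploy tags")
    placed = Counter(existing or {})
    for _ in range(count):
        # min() returns the first node with the lowest count of this service.
        target = min(eligible, key=lambda node: placed[node])
        placed[target] += 1
    return placed


if __name__ == "__main__":
    nodes = ["DO-4gb", "AWS-4gb"]  # hypothetical eligible nodes
    print(dict(place_containers(4, nodes)))  # {'DO-4gb': 2, 'AWS-4gb': 2}
```

With two eligible nodes, four containers land two per node and three land two and one. If one node already runs three containers, the next three all go to the other node until the counts even out.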

Set up DNS

The last thing to do is to configure your DNS to point at your node cluster servers. The exact approach will vary depending on your DNS provider, but should be something like this:

- Docker Cloud already sets up DNS for each service endpoint, so you can simply point CNAME records at the service endpoint of your choice. If your service endpoint is http://haproxy-dev.ab123456.svc.dockerapp.io:80, set up a CNAME record pointing at haproxy-dev.ab123456.svc.dockerapp.io (the hostname alone, without the scheme or port).

- The domain names to use are the ones specified in the VIRTUAL_HOST environment variable on the services linked to the load balancers. You can point each of them at the same CNAME target; the load balancers use that configuration to work out which app should handle each request.
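If your DNS provider accepts zone-file style records, the two steps above boil down to something like the following Python sketch. The helper names, the example domains, and the 300-second TTL are assumptions, and the sketch treats VIRTUAL_HOST as a plain comma-separated list of domain names.

```python
"""Build CNAME records pointing every VIRTUAL_HOST domain at one endpoint.

The zone-file style output and the example domains are assumptions; your
DNS provider's interface may look different.
"""

from urllib.parse import urlsplit


def cname_target(endpoint_url):
    """Strip the scheme and port from a service endpoint URL.

    A CNAME must point at a bare hostname, so
    'http://haproxy-dev.ab123456.svc.dockerapp.io:80' becomes
    'haproxy-dev.ab123456.svc.dockerapp.io'.
    """
    host = urlsplit(endpoint_url).hostname
    if not host:
        raise ValueError(f"no hostname in {endpoint_url!r}")
    return host


def cname_records(virtual_host, endpoint_url, ttl=300):
    """Return one zone-file style CNAME line per domain in VIRTUAL_HOST.

    Assumes VIRTUAL_HOST is a comma-separated list of plain domain names.
    """
    target = cname_target(endpoint_url)
    domains = [d.strip() for d in virtual_host.split(",") if d.strip()]
    # Trailing dots mark fully qualified names in zone-file syntax.
    return [f"{d}. {ttl} IN CNAME {target}." for d in domains]
```

For example, `cname_records("app.example.com, api.example.com", "http://haproxy-dev.ab123456.svc.dockerapp.io:80")` yields `app.example.com. 300 IN CNAME haproxy-dev.ab123456.svc.dockerapp.io.` plus the matching line for `api.example.com`. Every domain shares the same target.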

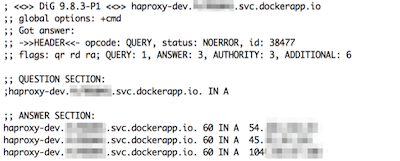
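Pointing every domain at the same CNAME works because the load balancer picks a backend from the HTTP Host header of each request. Here is a minimal Python model of that lookup. It only illustrates the idea; it is not the HAProxy configuration that Docker Cloud's load balancer actually generates, and the service names and domains are hypothetical.

```python
"""Toy Host-header routing: send each request to the linked service whose
VIRTUAL_HOST lists that domain. Service names and domains are hypothetical."""

# Hypothetical linked services and their VIRTUAL_HOST values.
LINKED_SERVICES = {
    "meteor-app": "app.example.com,www.example.com",
    "node-api": "api.example.com",
}


def build_routes(services):
    """Invert {service: 'dom1,dom2'} into {domain: service}."""
    routes = {}
    for service, virtual_host in services.items():
        for domain in virtual_host.split(","):
            domain = domain.strip().lower()
            if domain:
                routes[domain] = service
    return routes


def route(host_header, routes):
    """Pick a backend from the Host header, ignoring any ':port' suffix.

    Returns None when no linked service claims the domain.
    """
    hostname = host_header.split(":", 1)[0].strip().lower()
    return routes.get(hostname)
```

Because the routing decision depends only on the Host header, it does not matter which load balancer or which IP address a request arrives at.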

To see what the DNS resolution looks like, run dig or nslookup in your terminal against the CNAME you set up, or directly against the service endpoint hostname it points at. Something like this:

```shell
$ dig haproxy-dev.ab123456.svc.dockerapp.io
```

Notice that there are multiple results in the ANSWER section. Docker Cloud takes care of the hard work for us: instead of creating an A record for every node in every node cluster, we can point a single CNAME record at the service, and Docker Cloud will return the IP of every node running one of its load balancer containers. The browser will try these addresses and cache the first one that works. Most browsers immediately try the next address if a connection is refused, which is what happens when nothing is listening on that node. In effect, this lets you load-balance your load balancers!
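The client-side half of this trick can be sketched in Python: resolve every IPv4 address behind the hostname (roughly what dig shows), then connect to each in turn until one accepts. This is a simplification of browser behavior, which also involves caching and IPv6; `resolve_all` and `connect_first` are hypothetical helpers.

```python
"""Resolve every IPv4 address behind a hostname and connect to the first one
that accepts, roughly what a browser does with round-robin DNS. A simplified
sketch; real browsers add caching, IPv6 support, and connection racing."""

import socket


def resolve_all(host, port):
    """Return the unique IPv4 addresses for host, in resolver order."""
    infos = socket.getaddrinfo(host, port, socket.AF_INET, socket.SOCK_STREAM)
    addresses = []
    for _family, _type, _proto, _canon, sockaddr in infos:
        ip = sockaddr[0]
        if ip not in addresses:
            addresses.append(ip)
    return addresses


def connect_first(host, port, timeout=3.0):
    """Try each address in turn; a refused or timed-out attempt moves on."""
    last_error = None
    for ip in resolve_all(host, port):
        try:
            return socket.create_connection((ip, port), timeout=timeout)
        except OSError as exc:  # ConnectionRefusedError, timeouts, etc.
            last_error = exc
    raise last_error or OSError(f"no addresses for {host}")
```

Substitute your own endpoint hostname to try it. Python's `socket.create_connection` already walks the `getaddrinfo` results in a similar way when handed a hostname; the explicit loop here only makes the fallback visible.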

That wasn’t so bad, was it?

Well, stay tuned. We’ve got another fun installment coming soon. Over the next few posts, we’ll cover:

- SSL

- MongoDB Replication using volumes

- Backups (not boring anymore!)
